INTRODUCTION TO THE REPORT

The issue of developing and implementing adequate assessment strategies for nursing and midwifery education programmes has challenged both state bodies and educators across the world for over fifty years.

The ACE project was set up to report on current experiences of assessing competence in pre-registration nursing and post-registration midwifery programmes. Nursing and midwifery have undergone rapid and far-reaching changes in recent years, both in initial educational requirements and in the demands made on professionals in their everyday work. It is intended that the report will contribute to current developments in educational programmes and so help shape the future of the professions to meet the increasing demands being made upon them.

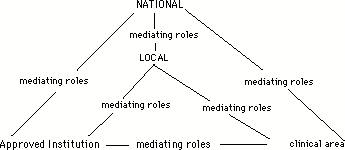

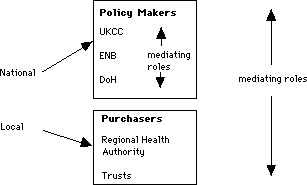

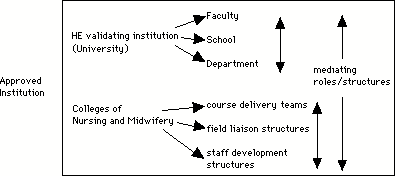

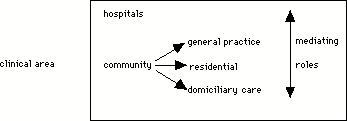

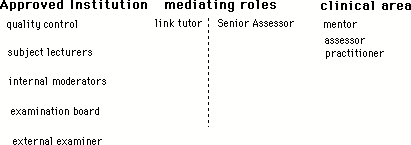

Decisions that affect both the quality of educational processes and the delivery of care are made at every level of the professions: nationally, locally and in face-to-face practice with clients. This report intends to contribute to the quality of educational decision making at each of these levels.

For this reason the report provides both general analyses of structures and processes, directed towards policy interests, and concrete illustrations of the issues, the problems met and the strategies employed by staff and students during assessment events.

CONTEXTUAL INFORMATION ABOUT THE RESEARCH PROJECT

The Setting Up and Operation of the Research

ACE was funded by the ENB during the period July 1991 to June 1993. It was conducted as a joint project between the School of Education of the University of East Anglia, the Suffolk and Great Yarmouth College of Nursing and Midwifery and the Suffolk College of Higher and Further Education.

The focus was on the assessment of competence of students on pre-registration nursing courses (Project 2000 (all branches) and non-diploma) and 18-month post-registration midwifery courses (diploma and non-diploma). The project conducted fieldwork in nine colleges of nursing and midwifery and their associated student placement areas, in the three geographical regions of East Anglia, London and the North East. Appendix A 1 provides full details of the conduct of the fieldwork. In brief, there were two phases, and through a process of progressive focusing the issues relevant to the current state of assessment of competence were explored.

During the first phase, data were collected in all nine approved institutions to identify issues of national importance operating in their local contexts. Issues related to the whole of the assessment process were explored, including planning and design, assessment experiences, and monitoring and development. At the end of this phase an interim report was written. It provided a means of articulating initial findings and of firming up the research questions for the second phase, which then directed the collection of relevant data in greater depth in a smaller number of fieldsites. This approach is generally known as 'theoretical sampling', which produces 'grounded theory' (Glaser and Strauss 1967). [1]

Project Aims

The ACE proposal set out with the following aims:

1. To establish the effectiveness of current methods of assessing competencies and outcomes from education and training programmes for nurses and midwives.

2. To examine the relationship between knowledge, skills and attitudes in the achievement of competencies and outcomes.

3. To establish the extent to which profiles from the assessment of individual competencies adequately reflect the general perception of what counts as professional competence.

4. To investigate the feasibility of simultaneously assessing understanding, the application of knowledge and the delivery of skilled care.

5. To collect perceptions of the usefulness of the UKCC's interpretive principles in helping nurse and midwife educators to assess competencies and outcomes.

Upon inspection it soon becomes clear that the aims overlap: it is difficult to pursue one without also pursuing the others. However, each has its own particular emphasis.

Aim one stresses 'effectiveness'. If a mechanism is to be effective, then its intended outcome must occur. Thus, for an assessment procedure to be effective, anyone it designates as competent must actually be competent. This is quite different from concerns with, say, cost efficiency. A system that correctly identifies competence in only 80 or 90 per cent of cases may still be considered cost efficient. It is then a matter of the level of risk that is considered acceptable. In an effective system the level of tolerable risk is zero. However, such a system may not be accepted as cost efficient. The aim of this project, then, is to critique the assessment of competency from the point of view of effectiveness. This has the advantage of making both the risk and the cost or resource implications clear in any subsequent discussion of 'effectiveness' as against 'cost efficiency'.

Aim two stresses the complex interrelations of knowledge, skills and attitudes. If the appropriate competencies and outcomes are to be achieved, then educational and assessment strategies must be attuned to the development of knowledge, skills and attitudes. None of these is a simple category for study. They resist the kind of observation that is appropriate for the study of minerals. Their observable dimensions are highly misleading, and the situation is rather like an iceberg with nine-tenths of its bulk hidden. Human behaviour is managed behaviour; that is to say, it is not open to straightforward interpretation. Individuals manage impressions to produce not only unambiguous communications but also multiple levels of possible interpretation and deception.

What counts as knowledge to one person may not be considered knowledge at all by another. This is as true for scientific communities as it is for lay people (Kuhn 1970, Feyerabend 1975). Again, there is no easy distinction to be made between 'knowledge' and 'skill'. Knowledge may initially be thought of as 'theoretical', as distinct from practical action or skills. Yet in professional action, knowledge is expressed in action and developed through action. To analyse professional action into 'skills' and aggregate them into lists required to perform a particular action may well do violence to the knowledge that encompasses, and is expressed in, the whole action. To see professional action as an aggregate of skills may thus lead to an inappropriate professional attitude. Knowledge, skills, attitudes and the processes of everyday action may in this way be regarded as different faces of the same entity.

It is the aim of this project to begin with the experience of professional action through which concepts of 'knowledge', 'skills' and 'attitudes' are expressed and defined in practice.

Aim three stresses the relationship between the assessment process and what it purports to assess. In short, are the assessment profiles that result from the assessment process fit for their purpose? To examine this question it is essential that 'what counts as competence' has been identified. There may not be a single 'general perception'. Rather, there may be a range of acceptable variation in what is perceived to be 'competence'. This implies a debate of some kind. One principal intention of this project, then, is to describe the debate and discuss the extent to which assessment structures and processes fit the purposes currently being debated. This in turn refers the discussion back to questions of effectiveness and of the ways in which 'knowledge', 'skills' and 'attitudes' are being identified and defined.

Aim four stresses the feasibility of assessing understanding and the application of knowledge at the same time as delivering care. Effectiveness and feasibility are closely allied: an assessment strategy must be feasible if it is to be effective. In short, the aim is directed towards the relationship between educational processes and care processes. This may be seen as presupposing a distinction between the two, so that assessing would be an additional burden to be carried at the same time as delivering care. The aim of this project is to explore the professional process in terms of its dimensions of care and education: is the one simply added to the other, or are they indissoluble sides of the same coin?

Aim five is different in kind from the preceding four. This aim has a survey dimension, whereas the others are interpretive and analytic in orientation. It is a straightforward matter of asking a range of individuals in the participant institutions whether the UKCC's guidelines have been found to be useful. For ease of reference, the UKCC's interpretative guidelines are reproduced in Appendix C 2.

Whilst the UKCC's interpretive principles acted as a focus of this aim, it became apparent from interviewing that including comments on the usefulness of national guidance in general (i.e. including ENB guidance) provided a more comprehensive exploration of the issue. Consequently, this wider perspective on the usefulness of national guidance was pursued.

METHODOLOGY

A Qualitative Approach for the Study of Qualitative Issues

The project aims define the kind of methodology appropriate to their achievement. For example, to identify what counts as an effective method of assessing competencies and outcomes, a structural analysis of cases considered to be effective is required. Before one can begin this, however, it is necessary to define what is to count as 'effectiveness'. This in turn requires the collection of views as to what is to count as competence and as outcomes that signify competence. The initial task, then, is to conduct a conceptual analysis of these key terms as they are expressed in the appropriate professions. Aim two equally demands a conceptual analysis of the relationship between the key terms 'knowledge', 'skills' and 'attitudes'. Once this has been established, it becomes possible to analyse the structural relationships between assessment procedures and processes and the real events in which competence is expressed as a professional quality. With some understanding of what is involved in the relationships between the performance of assessment and the delivery of care, aim four can then be explored. The methodology appropriate to these aims is one which identifies those instances in which the necessary features of the key terms are exhibited.

Through an analysis of those instances, the structures, mechanisms and procedures through which effective assessment takes place can be identified and described in order to facilitate future planning and design. This essentially fits the approach known as 'theoretical sampling' [2]. It is not a quantitative approach and thus does not result in percentages and tables which illustrate the distribution of variables. Rather, it generates theoretical and practical understandings of systems.

The methodology of the ACE project, then, is qualitative, focusing upon structures, processes and practices as these are revealed through documentation, interviews and observations. A full exploration of the methodology can be found in Appendix B, but broadly, the task has been to generate an empirical database. By a process of comparison and contrast, key groups of structures, processes and practices are identified as a basis for the more formal analysis of events.

Alongside the strategies for gathering and analysing data there have been strategies to ensure the 'robustness' of the data and their interpretation. These have included the use of an expert 'steering group', dialogue and feedback with participating staff and students, theoretical sampling, the triangulation of perspectives and methods, and reference to research output from other projects. The sensitivity of the methodology, with its emphasis on communication and personal contact, has been a feature, and attention to principles of procedure has facilitated fieldwork relationships.

In summary, methods of data collection were:

• In-depth interviews (individual and group) with students, clinical staff, educators and other key people in the assessment process. Recordings of interviews were transcribed for analysis.

• Observation of assessment-related events in clinical and classroom settings

• Creation of an archive of assessment-related documentation from approved institutions

The result was a large text-based archive constructed from interview transcriptions, observational notes and documentation of courses, planning groups and official bodies. The method of analysis involved various strategies of conceptual analysis, employing discourse and semiotic approaches to try to pin down the meanings of particular key terms employed by professional and student discourse communities. This in turn provided a means of identifying the institutional, local and national structures necessary for the construction and delivery of assessment. Structural analyses could then be made of particular approaches to identify the roles and associated mechanisms and procedures through which events (both intended and unintended) are effected. These events in turn were analysed into their stages, phases and process features in order to identify what counts as professional competence in action, in situ.

Whilst gathering and analysing the data, it was clearly impossible to understand the experiences of professionals and students without having grasped the contemporary changes taking place in nursing and midwifery. There are thus discourses of reform, of innovation and of change (whether or not perceived as being innovations or reforms) which act as the context for the conceptual, structural and process analyses described above. This context is the subject of the next section.

THE CONTEXT OF REFORM

Professional and Educational Change in a Changing World

By 1991, when the ACE project started its work, a number of significant changes had taken place both within nursing and midwifery education and within the structures of the occupational settings of nursing and midwifery. These changes formed part of a relatively long-term strategy for NHS reform which was to continue to develop and have an impact throughout the life of the project. The field of study was, and still is, characterised by wide variation, with the pace of change differing across both regional boundaries and local, internal boundaries. This complexity has been further compounded by the regularity with which new demands have been made on participating institutions as NHS reform gathered momentum and concepts such as the regulated internal market (DoH, 1989a) were tested and reformulated in the light of experience. Not only has this climate had an impact on practice and education in nursing and midwifery, but it has also made particular demands on the research methodology. A field of study in a constant state of flux demands that any account of the assessment of competence be set in its context.

The move of nurse and midwife education towards full integration with Higher Education institutions has added further complexity to the situational aspects of the assessment of competence. Alongside the strategy for NHS reform there has been a parallel movement towards educational reform, which has encompassed the organisation and funding mechanisms of all Higher and Further Education institutions (DES 1987, 1991). Throughout the study, therefore, the fields of nursing and midwifery education faced two challenges: firstly, to prepare practitioners for workplace environments which were themselves experiencing major organisational and ideological change; and secondly, as they moved closer to Higher Education, to contend with the structural changes occurring within those institutions. Studying nursing and midwifery education during this period has therefore inevitably raised a number of issues which speak directly to more general questions about the impact of both NHS reform and education reform.

It is the intention here to make explicit the main areas of change which were already having some impact at the start of the project, and to describe those changes which occurred during the study period, in order to set the scene for the arguments and recommendations raised in this report. These areas of change will inevitably have shaped ideas about what midwives and nurses do, what is expected of them, their educational needs and the ways in which competence is defined and assessed.

NHS Reform

Since the publication of the government white paper 'Working for Patients' (DoH, 1989a), the pace of change within NHS service provision has been relentless, and the impact of the subsequent legislation inescapable. 'Working for Patients' arose as part of a major review of NHS provision and was to provide the impetus for extensive NHS reform during the 1990s. The NHS and Community Care Act 1990 was the statute which finally placed into legislation reforms that were to have a far-reaching and ongoing impact on virtually all aspects of health service provision.

One of the central stated arguments for reviewing NHS provision, structure and funding has been the need to find economic and ideological solutions to identified changes in the health needs of the population. Demographic and epidemiological trends (HAS 1982, DoH, 1989b) have created new demands on health provision and have influenced recent moves towards a demand-led rather than service-driven health care economy.

'Working for Patients' attempted to address the challenge of creating provision on the basis of population need rather than the presence of clinical expertise, by creating a regulated internal market in which Health Authorities purchase services on behalf of their population from a range of potential service providers. The creation of this market has rearranged local provision from a single resource into several separate and semi-autonomous units.

The period of fieldwork undertaken in this study spanned two years of intense activity in relation to the recommendations embedded in 'Working for Patients'. The first NHS Trusts were approved in 1990, and throughout the study many of the clinical areas served by colleges of nursing and midwifery had gained Trust status or had applications in progress. This separation of purchasing from service provision, and the division of local provision, not only presented challenges for the management of the research, especially in terms of access to clinical areas, but was also evident in the data as concerns about the availability of student placement areas, the workload of clinical staff and the potential for even greater variation in expectations about the outcomes of nursing and midwifery courses.

The Changing Roles of the Nurse and Midwife

Any change in the demands which are placed on nurses and midwives within their occupational roles will have an impact on what counts as professional competence and on the way in which competence is assessed.

The Strategy for Nursing (DoH, 1989b) described a range of strategic targets for nursing and midwifery. These responded to changes which had already occurred in service provision and professional practice and anticipated the demands on nursing and midwifery into the next century.

Nurses and midwives had already faced a number of initiatives over the previous decade which were to have a direct impact on their role and practice. For example, the Griffiths Report (DHSS, 1983) had introduced general management to the health service, and the unquestionable right of nurses or midwives to hold senior generic management positions in hospitals and the like was gone. This left a major gap in opportunities for career advancement outside clinical practice. The year 1988 saw the achievement of two major initiatives which were intended to raise the value of clinical practice and provide opportunities for career progression through, on the one hand, a new clinical grading structure and, on the other, Project 2000 and the academic accreditation of nursing and midwifery courses. These suggest a trend towards a changing ideology and value base within nursing and midwifery and a reconceptualisation of professional role and status in relation to other health care workers. For midwives in particular, the last decade has seen a continuation of the strong movement away from their traditionally close identification with nurses and nursing practice. It is a clear reflection of the dynamic and changing nature of the field of study that, by the time the ACE fieldwork was complete, a major revision of the Strategy for Nursing had taken place to take account of other fundamental changes within service provision (DoH, 1993).

Other ideological changes were taking root within nursing and midwifery practice. Throughout the 1980s increasing emphasis was placed on community care (DHSS 1986, DoH, 1990), based on the notion that care in clients' normal everyday surroundings is of greater benefit than institutionalised care. For many community midwives this has meant less emphasis on high-technology births and more emphasis on the individual needs of women and their families. Changes in the location of care have had a significant impact on nurses and nursing practice. Under the NHS and Community Care Act 1990, responsibility for community care was vested in Social Services rather than the Health Service (DoH, 1990), and questions are being raised about both the role and competence of nurses in community settings and the extent to which health care should, or indeed could, be separated from social care. This change in the location of care has created different demands not just in relation to the skills required by nurses in community settings, but also on nurses in hospital settings, where patients require acute care over shorter periods.

In similar vein, there has been an increasing orientation within nursing towards holistic care, the prevention of ill health and health education. Midwives have always worked predominantly with healthy women and as a result have perhaps been better placed to reject a sickness-oriented model of care and adopt an approach centred on health, normality and education. This trend towards a health orientation has mirrored a national concern for health and health promotion over recent years. The Health of the Nation (DoH, 1992) described the government's policy and strategic targets in these areas, and reinforced the demand for nursing and midwifery curricula firmly based within a framework of health as well as ill health.

Changes have also occurred in the delivery of care. For more than a decade the trend has been to move away from task-based, routinised systems of care towards more individualised, client-centred approaches. Primary nursing and team nursing started to spread throughout the country, and the publication of the Patient's Charter (1991) formally introduced the concept of the 'named nurse' for each patient. It can be argued that individualised care, primary nursing and the concept of the named nurse have contributed significantly to a shift towards a model of nursing and midwifery practice in which judgement, assessment, care planning and reflective critical analysis are becoming increasingly valued role components. Where role expectations and values shift, so too should ideas about what counts as competence and how that competence should be assured. A major question, therefore, must be: to what extent have role expectations, and the values embedded in those expectations, kept pace with changes in policy and legislation? To what extent do practitioners, managers and educators hold onto role expectations which have not yet taken account of major policy shifts? The implication for the research is the need to uncover and explicate the relationships between role expectation and policy implementation, in order to reveal possible mismatches between the rhetoric of assessment documents and the realities of assessment experience.

Changes within Education

Although apparently less directly affected by the main thrust of NHS reform, professional education has been undergoing a fundamental transformation. Major changes were taking place within nurse and midwife education, both in the nature and content of educational programmes and in the structure and organisation of institutions. A subsidiary paper of 'Working for Patients', 'Working Paper 10', addressed the need to separate education provision from service units and purchasing authorities by investing the relationship between service and education with similar market processes. What followed was a wholesale review of nurse and midwife education across the country and consequent major reorganisation. At the beginning of the ACE project most education institutions had already undergone some form of rationalisation. All approved institutions involved with the study were the products of the amalgamation of several small schools of nursing and midwifery, which had traditionally been located on NHS hospital sites, into much larger colleges of nursing and midwifery. Most were therefore multi-site institutions in various stages of incorporation.

Later, as the overall intention to embed nurse and midwife education into an HE framework took shape, colleges of nursing and midwifery were to begin the process of wholesale integration with HE institutions. During the period of study, colleges were in various stages of integration, ranging from validation-only arrangements through to full integration.

Clearly, given the overall trend towards integration with HE, all fieldsites were experiencing major upheaval in terms of both organisational structures and working arrangements, hard on the heels of one or more previous periods of reorganisation. In one college, senior staff were facing the prospect of reapplying for their jobs for the third time in the space of two years.

Concurrent with these various strands of organisational restructuring, fundamental changes were being made to the nature of courses. Project 2000 (UKCC 1986) and moves towards devolved continuous assessment were having a dramatic impact on the nature of pre-registration nursing courses, as were the increasing number of direct entry midwifery courses and the accreditation of midwifery courses to the level of Diploma in Higher Education.

Project 2000 represents a major move away from the apprenticeship-style training of previous years. One of its fundamental and overriding stated aims is to provide nurses with the type of preparation which will best meet the changing demands and expectations placed on qualified nurses in changing contexts of health care delivery. If nurses are to cope with a working environment characterised by its changeability and ideologically committed to the primacy of the individual, then they will need new skills if they are to be flexible and adaptable enough to manage the unpredictability of individualised systems of care within a constantly changing professional context. These are the skills most frequently associated with HE. Colleges of nursing have therefore been required to form collaborative links with HE institutions in order to develop and validate Project 2000 courses.

The process of conjoint validation between nursing professional bodies and HE institutions placed different and sometimes competing sets of demands on course assessment strategies. On the one hand, professional bodies were concerned that assessment strategies should be sensitive to the demands of professional practice; on the other, the HE institutions' concerns focused on academic credibility and the extent to which the assessment design was adequately sensitive to intellectual competence.

Although midwifery education remains separate from Project 2000, a number of direct entry midwifery programmes share components with the Project 2000 Common Foundation Programmes. Even where Project 2000 has not had such a direct impact on midwifery education, there has been a parallel trend within midwifery to incorporate some of the more generic educational principles of Project 2000 within its own curricula.

Project 2000 and diploma-level midwifery education are only one aspect of a broader set of educational initiatives which challenge traditional expectations of what nurses and midwives do, how they interpret their roles and how they should be prepared for practice. PREPP (UKCC, 1990) and the ENB framework and Higher Award (ENB, 1990) address the increasing concern for opportunities for lifelong learning. They imply a distinct move away from the view that nursing or midwifery can draw on discrete, finite and stable sets of knowledge and understanding, and towards the notion that maintaining professional competence has more to do with providing skills for continual self-development. Central to these initiatives is the need to demonstrate evidence of continual progression and learning in order to be considered fit and competent to practise.

Changes to the structure, content and philosophy of nurse and midwife education were not occurring in isolation from wider changes impinging on HE and FE throughout the period of study (DES, 1987, 1991). Recent legislation (DES, 1992) has brought about a number of changes in the Higher Education institutions into which nurse and midwife education continues to integrate. These changes were heralded by the government as:

far reaching reforms designed to provide a better deal for young people and adults and to increase still further participation in further and higher education.

(Lord Belstead, Paymaster-General, Hansard, H.L. Vol. 532, col. 1022)

Changes to HE included a new system of funding (DES, 1988), which merged the functions of the old Polytechnics and Colleges Funding Council and the Universities Funding Council to form the Higher Education Funding Council. The intention behind this was to introduce greater competition between HE institutions for both students and funds in order to achieve greater cost effectiveness. The Act also created opportunities for a wider range of HE institutions to award their own degrees and to include the term 'university' in their titles. Some institutions experienced the impact as a series of shifting priorities as these changes gathered momentum throughout 1992. For institutions seeking to meet the criteria set by the Privy Council to gain university status, the main priority was experienced as a pressure to develop, market and deliver HE courses to increasing numbers of students. Once university status was achieved, many 'new universities' faced new demands for increased research activity in order to benefit in any substantial way from the research assessment exercise, which was to determine the allocation of university research moneys.

Although the effect of these changes on the project fieldwork was neither as direct nor as dramatic as the effect of NHS reform, several colleges involved with the study had HE partners which were undergoing fundamental changes as a direct consequence of the above legislation. Some colleges involved in the study started their integration process with polytechnics which have since gained university status. For colleges of nursing and midwifery these changes were not just about nomenclature but also about the nature, structure and expectations of their relationships with their HE validating bodies and partners.

In summary, during the period of study a number of pressures were operating upon both the understanding and the administration of the assessment of competence. These can be categorised into the following groups:

• changes in population health needs

• values about health care and service provision

• political/ideological changes (structural changes)

• educational reform

Each category exerts its own distinct range of changes and pressures upon individuals and groups involved in the assessment process on both personal and professional levels, affecting what counts as competence and the means by which it should be assessed. Consequently, this section concludes with a selection of extracts from the data which articulate some experiences of the changing context. Further examples can be found throughout this report.

THE EFFECTS OF CHANGE 'ON THE GROUND'

Individual Experiences of Change

The research examines the assessment of competence in nursing and midwifery education within the changing context described above. It does so from the perspective of the individuals who deliver the service: people upon whom these changes impinge directly but who, as members of a body which has campaigned for the changes for a considerable time, are also the motivators of the continuing developments. As both agents and subjects of change, people experience it with mixed feelings, which the research has set out to capture. For some, the effects of changes within educational and health care environments are experienced as a continual brake on educational planning:

The Health Authority was in a state of flux and there was a lot of change going on. First we amalgamated with another Health Authority and then second we amalgamated as one college of nursing with other schools of nursing. So every time you thought, "Now we've got some ideas coming on paper," you had to stop and re-evaluate because you got new schools joining and then you had to look at what they were doing.

Organising and guaranteeing a range of clinical experience for students on placements is also difficult in some instances:

I find the clinical areas are changing their speciality month by month. You know you have one area that's doing so and so (...) and then you find that they're no longer doing that because some other consultant has actually gone in there and they're doing something else. It's a constant battle, it really is. (educator)

A prevailing climate of uncertainty makes long-term planning difficult and unsettling in many instances:

The whole future's up for grabs. The college may become an independent (...) it may become completely separate, someone may take on a faculty of nursing in Middletown. The next six months should give some indication of...politically...of how things go. (educator)

The cumulative effect of change was highlighted by one educator:

I think it's...not just how it's changed, it's the speed of change. There is more coming on, you just get one set of initiatives finished and then there's another set going through, and another set. And on top of that there's changing the curriculum...there's changes, it's the speed of change. Change has always been there but there's been more time to assimilate it, to take it out there to work out there to change it. Now it's so hard to keep up with the change and take it out there. And a lot of people out there in the clinical field are not really sure what is going on.

Those involved in education are keen to ensure that colleagues in patient care are kept up to date with educational change. Likewise, the need to share understandings about developments occurring in service is recognised, but remains a difficult task in a climate of competing demands:

...I think our staff here don't always recognise all the great changes that are happening in education, they see their own changes, changes in technology, the way we're pushing patients through, reducing patients' stays, the way we are changing our structures and our ways of working and contracting, and income comes in and goes out. We don't get a budget any more, we have to earn our income through so many patients we see, and they don't see that the college have got their own stresses and strains. What the college don't see is perhaps the speed at which we're moving forwards and the new language. I'm not convinced that my college friends really have an understanding and grasp of the new NHS. They have not got a grasp of contracts and earning income through numbers of patients. (nurse manager)

The report offers a detailed record of individual perceptions of change and provides an account of the manner in which these have affected, and are likely to continue to affect, the implementation and further development of structures, mechanisms, roles, and strategies for devolved continuous assessment.

CHAPTER ONE

ABSTRACT

Assessment in general has a range of purposes, including the formative ones of diagnosis, evaluation and guidance, and the summative ones of grading, selection and prediction. It is expected to be reliable, valid, fair and feasible, and to offer what is usually called, somewhat mechanistically, 'feedback'. The assessment of professional competence must additionally be able to evaluate practical competence in occupational settings, and to determine the extent to which appropriate knowledge has been internalised by the student practitioner. Approaches to assessment which lie within the quantitative paradigm, including technicist and behaviourist approaches as well as quantitative approaches proper, are suitable for collecting information about outcomes within highly controllable contexts, and for collecting information which can be measured, or recorded as having been observed. Such approaches are inappropriate for assessing the degree to which the student professional has developed a suitably flexible and responsive set of cognitive conceptual schemata that facilitates intelligent independent behaviour in dynamic practical situations. Nor do they take account of the fact that contexts of human work themselves continue to evolve and change, and that the individual's ability to blend knowledge, skills and attitudes into a holistic construct that informs their practice is therefore crucial. Assessment from within the educative paradigm, on the other hand, does take account of these things, whilst also acknowledging that assessment itself is an essential element of the educative process. Educative assessment takes full account of institutional and occupational norms, and of the fact that the individuals involved are not automatons but people who interpret situations and make sense of them in terms of their experience; its structures are generated in response to those features rather than in contradiction of them. It offers structures, mechanisms, roles, and relationships that reflect interior processes and take into account the essential 'messiness' of the workplace. It does not attempt to impose a spurious logical order on what in practice is complex. In so doing it performs a formative function at the same time as it performs the summative one: the one does not follow the other, but happens in parallel. Assessment from the educative paradigm is integral to the learning process that generates individual development. Competency-based education stands provocatively on the bridge between the quantitative paradigm and the educative paradigm, still making up its mind about the direction in which it should move.

THE ASSESSMENT OF COMPETENCE: A CONCEPTUAL ANALYSIS

Introduction

The study of the assessment of competence would seem straightforward were it not that considerable controversy and confusion over what is to count as 'competence' arise at every level in the system. One way of beginning the analysis of the 'assessment of competence' is to ask such questions as:

• what function it serves within a symbolic system or social process

• how it is related to other elements or features

• how it is accomplished as a practical activity

What characterises human activity is its symbolic dimension. That is to say, it is not enough just to observe a behaviour or an action; one has to ask what it means within a complex system of thought and action. Key concepts are regulative agents in a system. In other words, they generate order: they give a pattern to behaviour such that each element is related to every other element. Every element can be analysed for its function in the system. Meaning, however, is not open to inspection like a physical object. What is said is not always what is meant. What one intends to mean is not always what others take one to mean. An action may have consequences other than those intended, because it has been variously interpreted, or because the system is so complex that it defies accurate prediction.

The intended outcomes of assessment, for example, are to ensure that certain levels of competence are achieved so that employers and clients can be assured of the quality, knowledge and proficiency of those who have passed. The unintended or hidden purposes may be quite different. For example, educationalists have long referred to the 'hidden curriculum' and its ideological functions in socialising pupils to accept passive roles, gender and racial identities and their position within a social hierarchy, as well as social conformity and obedience to those in power. [3] Occupational studies in a range of professions reveal a similar social process, through which students undergoing courses of education for a particular profession become socialised into that profession's occupational culture. In studies of police training (for example, NSW 1990), trainees talk about the gap between the real world of practice that they experience when on placement in the field and the lack of 'reality' of their academic studies. Similar experiences are recorded in studies of nursing and midwifery (Melia 1987; Davies and Atkinson, 1991). It could be said, then, that there are hidden processes of assessment in which students are assessed according to their ability to 'fit in' to the occupational culture. This hidden process may run parallel to the official or overt forms of assessment. How the two kinds of process interact in the production of the final assessment judgement is a matter for empirical study. The following chapter will set the scene for such empirical analyses by exploring alternative approaches to conceptualising the issues involved in the study of a) assessment, b) competency/competence/competencies, and c) assessment of competency/competence/competencies.

It would be artificial to separate these strands completely in the following sections. Nevertheless, to aid the organisation of the argument, each is emphasised in turn under the following headings:

• The Purposes of Assessment

• The Professional Mandate

• Approaches to Defining Competence

• From Technicist to Educative Processes in the Assessment of Professional Competence

• Finding a Different Approach

1.1. THE PURPOSES OF ASSESSMENT

1.1.1. From Technicist Purposes to Professional Development

Traditionally, the purpose of assessment is to gauge in some way the extent to which a student has achieved the aims and objectives of a given course of study or has mastered the skills and processes of some craft or area of professional and technical activity. The act of assessment discriminates between those who have and have not passed, and further ranks those who have passed in terms of the value of their pass. The grade or mark awarded says something not only about the work achieved but also about the individual as a person in relation to others, and about the kinds of other social rewards that should follow. Eisner (1993) traces the relationships between testing, assessment and evaluation back to their origins in the scientific purpose 'to come to understand how nature works and through such knowledge to control its operations.' Through the influence of Burt in Britain and Thorndike in America, psychological testing was founded upon principles modelled on the mathematical sciences. During the 1960s, however, new purposes arose: 'For the first time, we wanted students to learn how to think like scientists, not just to ingest the products of scientific inquiry'. This required approaches different from those of the educational measurement movements:

Educational evaluation had a mission broader than testing. It was concerned not simply with the measurement of student achievement, but with the quality of curriculum content, with the character of the activities in which students were engaged, with the ease with which teachers could gain access to curriculum materials, with the attractiveness of the curriculum's format, and with multiple outcomes, not only with single ones. In short, the curriculum reform movement gave rise to a richer, more complex conception of evaluation than the one tacit in the practices of educational measurement. Evaluation was conceptualised as part of a complex picture of the practice of education.

Scriven (1967) introduced the terms 'formative' and 'summative' evaluation, placing attention not simply upon specified outcomes that could be 'measured' but also on the quality and purposes of the processes through which attitudes, skills, knowledge and practices are formed. The focus upon the formative possibilities of evaluation drew attention to the processes of learning, teaching, and personal and professional development, and to the intended and unintended functions of assessment procedures. To address these kinds of processes, methodology shifted from quantitative to qualitative and interpretative approaches which focused upon the lived experiences of classrooms. What was found there was a complexity and an unpredictability that earlier measurement methods had overlooked.

From the mid-1970s to the mid-1980s in America, and broadly from the 1980s to the present day in the UK, concern was expressed regarding the outcomes of schooling. There was a general call from politicians and employers to go 'back to basics'. This call was articulated through increasing political demands for testing and for accountability. However, as Eisner points out, many realised that 'educational standards are not raised by mandating assessment practices or using tougher tests, but by increasing the quality of what is offered in schools and by refining the quality of teaching that mediated it.' In short, 'Good teaching and substantive curricula cannot be mandated; they have to be grown.' Professional development, together with appropriate structures and mechanisms for the development of courses and appropriate methods of teaching and learning, is thus essential.

With the return to demands for 'basics' and 'accountability', the term 'assessment' has come to supplant 'evaluation' in much of the American literature. However, the term is used in a new sense: it does not simply connote the older forms of testing course outcomes and individual performance, but includes much of what has been the province of evaluation. As Eisner concludes, 'we have recognised that mandates do not work, partly because we have come to realise that the measurement of outcomes on instruments that have little predictive or concurrent validity is not an effective way to improve schools, and partly because we have become aware that unless we can create assessment procedures that have more educational validity than those we have been using, change is unlikely.'

Brown (1990) has described the emergence in British education of a multi-purpose concept of assessment 'closely linked to the totality of the curriculum'. The purposes it includes are fostering learning, improving teaching, providing valid evidence bases about what has been achieved, and enabling decision making about courses, careers and so on. The TGAT report on assessment in the National Curriculum for schools saw information from assessments serving four distinct purposes:

1 formative, so that the positive achievements of a pupil may be recognised and discussed and the appropriate next steps may be planned;

2 diagnostic, through which learning difficulties may be scrutinised and classified so that appropriate remedial help and guidance can be provided;

3 summative, for the recording of the overall achievement of a pupil in a systematic way;

4 evaluative, by means of which some aspects of the work of a school, an LEA or other discrete part of the educational service can be assessed and/or reported upon.

In addressing these concerns, four kinds of purpose need to be considered when thinking about assessment:

• technical

• substantive

• social

• individual developmental

1.1.1.a. Technical Purposes

The essential technical purposes of an assessment procedure are to ensure reliability, validity, fairness and feasibility in what it assesses and, in addition, to provide feedback to the student, the teacher, the institution, and the national and professional bodies that ensure the quality of the course. To these may be added the six possible purposes of assessment identified by Macintosh and Hale (1976): diagnosis, evaluation, guidance, grading, selection, and prediction.

1.1.1.b. Substantive Purposes

The substantive purpose of a nursing or midwifery course includes a grasp of the appropriate knowledge bases as well as the accomplishment of appropriate degrees of practical competence in occupational settings. The question of who should decide what counts as an appropriate knowledge base and an appropriate level of performance is made more complex by the advent of local decision making. At a local level, market forces may lead to the tailoring of courses to meet local needs. The question then arises of how national standards, or levels of comparability, can be maintained. A professional trained to meet the needs of one area may be inadequately trained to meet the needs of another part of the country. Substantive issues are thus vital to maintaining a national perspective not only on professional education but also on professional competence.

1.1.1.c. Social Purposes

At a social and political level the public needs to be assured of the quality of professional education. Assessment in this case serves the function of quality assurance and can contribute to public accountability. However, there are other hidden (even unintended) social functions. To gain a professional qualification also means gaining a certain kind of social status. It means taking on not merely the occupational role, but the social identity of being a nurse or a midwife. With the role goes an aura of expertise, a particular kind of authority that can extend well beyond the field of professional activity into other spheres of social life. On the one hand, it can be argued that through their authority the professions act as agents of social control; equally, it can be argued that they act as change agents, raising awareness of, say, the impact of unemployment or poverty on health.

1.1.1.d. Individual Developmental Purposes

Work is still the dominant social means through which people form a sense of self-worth, explore their own potential, contribute to the well-being of others and feel a sense of belonging. Therefore, becoming qualified to enter a profession marks a stage not only in the social career of the individual but also in his or her personal development. Assessment is thus, in its widest sense, about human development, purposes and action.

To become a professional means that the individual has internalised a complex cognitive conceptual schema that enables him or her to respond appropriately to dynamic practical situations. Knowledge, skills and attitudes blend in the person to the extent that the individual's identity is bound up with professional activity. This is what makes both defining competence and assessing it so difficult. It is not just that the individual is perceived as a professional; he or she is perceived to have a mandate to act.

1.2. THE PROFESSIONAL MANDATE

A mandate to act can be defined at one level as having the legal power to enforce an action. A professional mandate, however, is not limited to this. The mandate arises because the professional has authority, social standing and a body of knowledge through which change can be effected. Both nursing and midwifery have undergone changes in status during this century and currently lay claim to a professional identity.

As recently as 1969 Etzioni regarded nursing as a semi-professional occupation because training was too short and nurses were neither autonomous nor fully responsible for their decision making. Not only is nursing perceived by many as subordinate to the medical profession, but midwifery is also in the process of distinguishing its own professional identity from that of nursing. Whittington and Boore (1988: 112), following their review of the literature, identified the characteristics of professionalism as:

1. Possession of a distinctive domain of knowledge and theorising relevant to practice.

2. Reference to a code of ethics and professional values emerging from the professional group and, in cases of conflict, taken to supersede the values of employers or indeed governments.

3. Control of admission to the group via the establishment, monitoring and validation of procedures for education and training.

4. Power to discipline and potentially debar members of the group who infringe against the ethical code, or whose standards of practice are unacceptable.

5. Participation in a professional sub-culture sustained by formal professional associations.

Hepworth (1989) refers to the fact that nursing has been considered variously as an 'emerging profession, as a semi-profession and as a skilled vocation'. Nevertheless, at first glance at least, nursing and midwifery could be said to be increasingly able to meet the above criteria. Hepworth, however, points back to the underlying uncertainty concerning the status of nursing as a profession, and to the impact this has upon the assessment of students when assessors:

are required to assess a student's competence to practice as a professional, when the role of that professional is ambiguous, changing, inexplicit, and subject to a variety of differing perspectives. The effect of this complexity is evident in the anxiety and defensiveness which the subject of professional judgement often raises in both the students and their assessors/teachers, particularly if that judgement is challenged or it is suggested that the process should be examined.

Added to this, the British political context for all the health professions has been, is, and is likely to be for the foreseeable future, one of considerable change, in which old practices and definitions are replaced by new ones and professionals often feel under threat and de-skilled by innovations and their demands. In the face of such external pressure, there has never been a greater need for both nursing and midwifery to reflect upon their status as possessors of domains of knowledge and theorising in order to assert their independence and identities. However, as with any complex occupation, there is no homogeneous, all-embracing view of 'nursing' or 'midwifery' as the basis upon which to construct domains of knowledge. There is rather an agglomeration of spheres, each with its own views and associated practices, which, broadly assembled, come under the name of 'nursing' or 'midwifery' (cf. Melia 1987).

Project 2000 and the direct entry midwifery diplomas each signal a change in what may be called their appropriate 'occupational mandates'. Such a mandate includes not only the official requirements as laid down by the ENB, UKCC and EC but also the historically developed knowledges, skills, competencies, values, conducts, attitudes, and images of the nurse and the midwife in relation to other health professionals. These interrelated images, ideas and experiences constitute the concept of the competent professional.

Change cannot simply be mandated by legislation. The historically developed beliefs and practices of a profession cannot be altered overnight. Legislation can, of course, force changes, but these may not be in the directions desired. Official changes can be subverted, resisted, or glossed over to hide the extent to which practice has not changed. If real change is desired, then it needs to be 'grown' rather than imposed. Project 2000 and the direct entry midwifery diploma may be seen as attempts to grow change in the professions. In the process, competing definitions of competence emerge, some of which draw upon traditional legacies, others upon official pronouncements and legal texts, and yet others upon the personal and collective experiences of practice. Accordingly, professional competence as a concept is open to variations in definition, many of which are vague.[4]

1.3. APPROACHES TO DEFINING COMPETENCE

1.3.1. Some Approaches to Finding a Definition

For Miller et al. (1988), competence can be seen either in terms of performance or as a quality or state of being. The first is accessible to observation; the second, being a psychological construct, is not. However, it could be argued that the psychological construct should lead to, and therefore can be inferred from, competent performance. On this view, the two definitions of competence are compatible. The question remains, however: how easily and unambiguously can performance signify competence? The breadth of definitions of competence ought to make researchers cautious in answering this question. Runciman (1990) draws on two broad definitions of competence:

Occupational competence is the ability to perform activities in the jobs within an occupation, to the standards expected in employment. The concept also embodies the ability to transfer skills and knowledge to new situations within the occupational area .... Competence also includes many aspects of personal effectiveness in that it requires the application of skills and knowledge in organisational contexts, with workmates, supervisors, customers while coping with real life pressures.

(MSC Quality and Standards Branch in relation to the Youth Training Scheme)

(Competence is) The possession and development of sufficient skills, knowledge, appropriate attitudes and experience for successful performance in life roles. Such a definition includes employment and other forms of work - it implies maturity and responsibility in a variety of roles; and it includes experience as an essential element of competence.

(Evans 1987: 5)

Although these definitions offer an orientation towards competence, neither is precise enough to make clear how such competence can be manifested unambiguously in performance. To overcome this, one approach has been to specify individual competencies behaviourally, in the form of learning outcomes and associated criteria or standards of performance, the sum of which makes up the more encompassing concept of competence. Thus competence is seen as a repertoire of competencies which allows the practitioner to practise safely (Medley 1984). This approach is broadly quantitative. The next problem, then, is how these competencies may be identified for quantitative purposes.

According to Whittington and Boore (1988), there has been little research into actual nursing practice; competencies have therefore generally either been produced in an intuitive, a priori fashion, or been based upon experts' perceptions of what counts as competence (as in the Delphi[5] or Dacum[6] approaches). However, this criticism is being addressed in the work of Benner (1982, 1983), in qualitative studies such as Melia (1987) and Abbott and Sapsford (1992), and in the increasing interest these kinds of work are stimulating, through which an alternative approach can be developed. In the final section of this chapter, this alternative approach will be discussed in relation to its implications for the development of an educative paradigm through which competent action may be educed and evaluated.

Although it is not the central purpose of this project to explore methods of identifying competence, such methods have direct implications for the forms that assessment processes and procedures take. A predominantly quantitative approach has quite different implications from those of a largely qualitative approach. Norris and MacLure (1991) provided a summary of approaches following a review of the literature in their study of the relationship between knowledge and competence across the professions. For the purposes of this study, their summary has been reframed into two groups, with a minor addition, as follows:

Summary of methodologies for identifying competence

Group A

• brain-storming/round table and consensus building by groups of experts (eg ETS occupational literature; Delphi and Dacum);

• theorising/model building (based on knowledge of field/literature - eg Eraut, 1985; 1990);

• occupational questionnaires;

• knowledge (conceptual framework) elicitation for expert systems through interviewing (eg Welbank, 1983);

• knowledge elicitation through observation in experimental settings (Kuipers & Kassirer, 1984) or modelling of expert judgement via simulated events (Saunders, 1988).

Group B

• post hoc commentaries on practice by expert practitioners (based on recordings/notes/recollections - eg Benner, 1984);

• on-going commentaries on practice (eg Jessup forthcoming);

• practical knowledge/tacit theory approaches (self-reflective study) (Elbaz, 1983; Clandinin, 1985; Schon, 1985);

• critical incident survey and behavioural event interviews (eg McLelland, 1973);

• observation of and inference from practice (based on practitioner-research, eg Elliott 1991).

[Based on MacLure & Norris, 1991: p39]

The methods in group A are essentially a priori and quantitative, seeking measurable agreements, while those in group B focus primarily upon the analysis of observation and interview accounts. Group A tends towards the quantitative paradigm, whereas group B tends towards the qualitative paradigm. The division is not absolute: in group A, it could be argued, expert panels include a high degree of qualitative material, and in group B the McLelland approach results in quantitative criteria. Qualitative approaches do not necessarily exclude the use of quantitative techniques, and quantitative approaches frequently depend upon 'soft' or subjective approaches to develop theory for testing. The difference in each case is one of value and purpose: quantitative approaches value measurement and explanation more highly, whereas qualitative approaches tend to value meaning and understanding more highly. The former tends to reinforce technical (and, in the extreme, technicist) approaches to training and assessment, whereas the latter tends to reinforce the development of personal and professional judgement. In this latter approach, both training and assessment demand the provision of evidence of critical reflection on practice in which appropriate judgement has been the key issue. Since judgement is context- and situation-specific, it cannot be reduced to behavioural units, but it can be made open to public accountability through the discussion of evidence.

The issue for assessment concerns the nature of the domain(s) of knowledge and theorising, relevant to the sub-spheres of practice, that competent nurses and midwives possess and that are essential to marking them out as professions. That much of their knowledge is held implicitly or tacitly may be an argument for their status as emergent rather than fully fledged professions. Alternatively, such tacit knowledge may be characteristic of any profession. In either case, what approaches to the identification of competence and its assessment are appropriate to such complex fields of action?

1.4. FROM TECHNICIST TO EDUCATIVE PROCESSES IN THE ASSESSMENT OF PROFESSIONAL COMPETENCE

1.4.1. Differentiating Quantitative and Qualitative Discourses

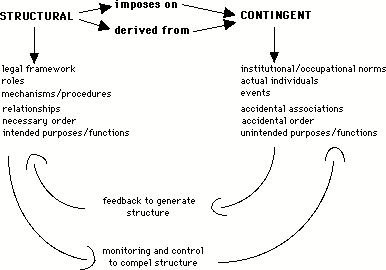

A formal assessment process requires both a social apparatus of roles, procedures and regulations, and a conceptual structure adequate to generate evidence upon which to base judgements on student achievements. A structure to make this happen can be logically, even scientifically, formulated. However, the events that actually take place as a result may not always be those expected. Events are contingent, whereas structures may be rationally determined or legally imposed. While a role may be rationally defined and related to other roles in a clear structural pattern, the individual who occupies that role is contingent, in the sense that it is the role, not the individual, that is necessary to the structure. Each individual who could occupy a given role brings different needs, interests and aspirations, as well as abilities, values and experiences, which frame how the role is interpreted and realised in practice. Broadly, the assessment process can be analysed according to such structural and contingent aspects. In the development of an assessment structure, the issue is whether the structural aspects are to be imposed upon actual practice or derived from it.

In one sense, the process of assessment can be read as an attempt to impose a logical order on the 'messy' reality of actual practice. Its purpose would be to control or regulate processes through well-defined mechanisms and procedures, producing outcomes which ensure some comparability and assure certain standards of quality or attainment. In the second sense, assessment structures, mechanisms and procedures are seen as outcomes generated by reflective feedback on practice. Through reflection, structural or common features of practical competence are identified, but not to the detriment of specificity, difference and variety. The second is thus sensitive to the dynamics of situations in a way that the first is not.

Quantitative methods are typically employed in approaches which seek to control, and hence compel the adoption of, a certain kind of structure. The alternative approach, which seeks to generate (or grow) structure based upon reflection on practice, places at the centre of its arguments concepts of 'value' and 'meaning' (as distinct from observable and measurable units), attitudes, judgement and other personal qualities: a qualitative approach. The latter approach thus places human action, reflection and decision making at the centre of its discourses, whereas the former replaces the human decision maker with instruments constructed to measure or calculate, and thus to reduce, 'human judgement', which is seen as a source of potential error.

Each has quite different implications. Firstly, there are implications both for the way education to enter the professions is organised and for the legitimation and status of a profession in relation to its client groups and its employers. Secondly, there are implications for the principles, procedures and techniques of assessment. For the sake of convenience, the first group of discourses about competence will be referred to as the quantitative, and the second as the educational. The term educational or educative is chosen so as not to reinforce the easy opposition between mathematical approaches and qualitative approaches in the social sciences. Whereas a quantitative approach, in the interests of 'objectivity', may seek to exclude discourses of value, judgement and human subjectivity, an educational approach values all the power of precision that mathematics and logical forms of analysis can contribute to the full range of human discourse, judgement and action. In this sense, the educative approach is inclusive and action-centred, whereas the quantitative approach is exclusive.

1.4.2. The Quantitative Discourses (With Particular Reference to the Behavioural and Technicist Variants)

Assessment should not determine competence; rather, competence should determine its appropriate form of assessment. How competence is defined depends upon the methods, beliefs and experiences of professionals. Such definitions can be revealed through the kinds of texts they produce and the ways in which they talk about, support and contest meanings of competence. The definitions that emerge, or can be drawn out (educed), from the range of texts and discourses provide accounts of how practical competence is seen. Within these discourses, it is frequently the case that quite distinct, even mutually exclusive, views can be described. To mark such distinctions the term paradigm is often used.

The term 'quantitative paradigm', as employed here, refers to those discourses of science which involve throwing a mathematical grid upon the world of experience. Logical deductive reasoning, measurement and reduction to formulaic expressions are its features. The technicist paradigm, as employed in this report, is a particular version of the more general quantitative paradigm which seeks measurement, observable units of analysis and logical arrangements. The technicist paradigm is reduced in scope in that it takes its frameworks of analysis and its procedures for granted and employs them routinely rather than subjecting them to the judgement of the practitioner. Although this characterisation is an 'ideal type', it has a basis in the data.

Later discussions will report the sense of frustration some assessors and students feel in filling out assessment forms, in trying to interpret the items in relation to practical experience and in accordance with their best judgement. The typical complaint may be summed up as follows: the key dimensions of professionality cannot be reduced to observable performance criteria[7].

The aims of the technicist paradigm can be seen most clearly in the 'scientific management' of Taylor (1947[8]) and the developments in stop-watch measurement of performance; the behaviourism of Watson (1931) and later Skinner (1953, 1968); the mental measurement of Burt (1947), Thorndike (1910) and Yerkes (1929); and programmed learning and instructional design (Gagné, 1975). Here the emphasis was upon control and predictability through measurement and the reinforcement of appropriate behaviours to produce desired outcomes.

In making such a reduction, the technicist paradigm reinforces a split between theory (or knowledge) and practice by separating out the expert who develops theory (knowledge) from the practitioner who merely applies it and whose application can be assessed in terms of performance criteria. Also implied in this is a hierarchical relation between the expert and the non-expert, whether seen as practitioner or trainee.

In addition, within a quantitative/technicist paradigm, skills assessment models and competency-based education each assume that the student initially lacks the required skill or competence. Through training the student then acquires the particular skill or competence required. A particular combination or menu of such skills or competencies then defines the general competency of the individual. This is most clearly expressed by Dunn et al (1985:17), writing in a medical context:

... competence must be placed in a context, precise and exact, in order for it to be clear what is meant. To say a person is competent is not enough. He is competent to do a, or a and b, or a and b and c: a and b and c being aspects of a doctor's work.

The essential 'messiness' of everyday action, the complexity of situations, the flow of events, and the dynamics of human interactions make the demand for a context which is 'precise and exact' unrealistic.

The operationalisation of such an approach is exemplified in the programmed learning of Gagné (see Gagné and Briggs, 1974) or in the exhaustive and seemingly endless lists of Bloom (1954, 1956). The issue raised at this point is not the value of analysing complex activities and skills, but the use to which such analyses are put in everyday practice.

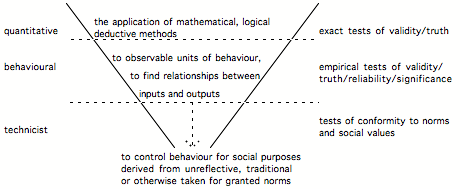

Schematically, the relationship between the quantitative, the behavioural and the technicist views can be set out as follows:

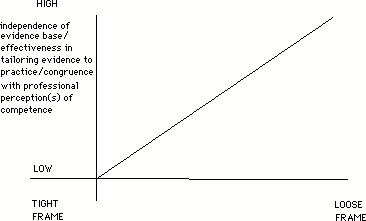

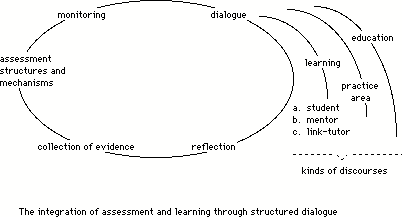

(Figure 1)


The diagram represents the decreasing scope from the quantitative to the technicist. It moves from a systematic method of investigating and comprehending the whole world of experience open to thought, to a scope reduced to observable behaviours (as opposed, say, to felt inner states), and finally to the reduction of methods and knowledge to the limited purposes of social control or the engineering of performance. Thus, in general terms, as Norris (1991) comments, discourse about competence:

has become associated with a drive towards more practicality in education and training placing a greater emphasis on the assessment of performance rather than knowledge. A focus on competence is assumed to